In a previous post about referrer spam in Google Analytics I hinted that a much bigger issue might stem from this fairly harmless attack. The problem I present in this article extends to basically all Google Analytics data, for any website out there, no matter how big or small. The problem is how to protect the integrity of all of your Google Analytics data against cyber vandalism, malicious pollution and distortion.

Why are attacks possible and how do they work?

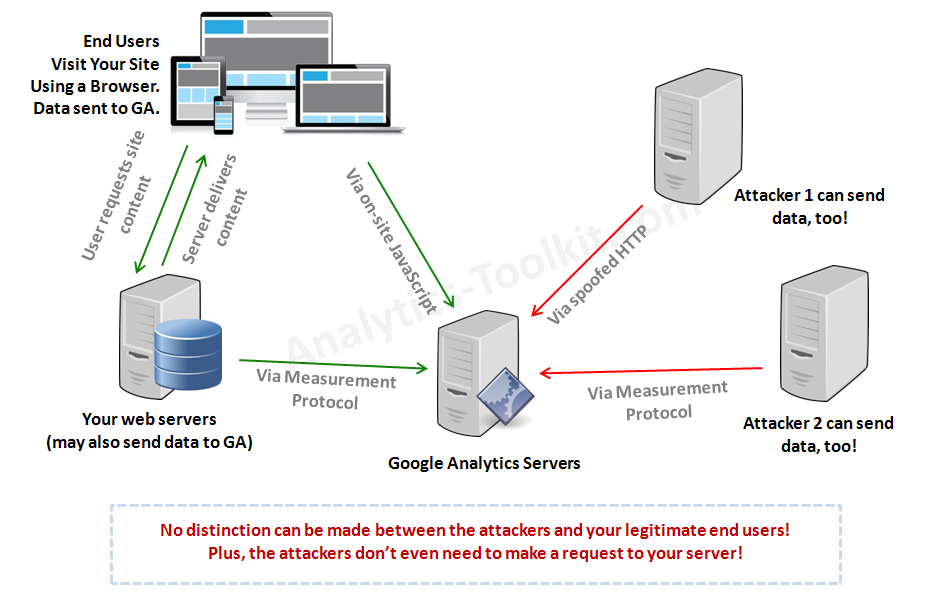

Many people assume that since a session is registered in Google Analytics, somebody (a person, or at the very least a software robot) visited their site and that visit was then registered by GA. They are wrong. Google Analytics relies on the client’s browser to execute a certain piece of JavaScript that makes an HTTP request to the Google Analytics servers. The GA server then registers a hit on your website, and a cookie is set in the user’s browser. However, the HTTP request can be sent by any device connected to the internet, and as long as it contains all required parameters it will still register a hit with Google Analytics for your site. There is no authentication done during this process.

Furthermore, not so long ago Google announced the Measurement Protocol, which provides an interface for a server to communicate with Google Analytics directly via an official protocol. This was possible and done before that, but was not documented. The purpose of the Measurement Protocol is to allow companies to connect different systems to Google Analytics and to collect offline data to accompany their website visitors’ data. Examples of systems that would use the protocol are phone-tracking solutions, POS systems, offline reservation systems, CRM systems, etc.

However, since there is no authentication in this whole process, virtually anybody can send hits for your website, just by knowing your UA-ID (UA-XXXXXXX-XX). Google Analytics will accept the data as it is, putting it in your reports. This is exactly how referrer spammers are currently polluting your data with their “ads”. This is also what a potential malicious attacker would use to compromise your data integrity, effectively carrying out a data pollution attack.

Here is a scheme of how all this works:

Literally everything can be spoofed (forged) during these interactions. Hostname? Yes. Referrer data? Yes. Campaign tracking data? Yes. URL paths? Yes. Geo-location and IP address? Yes! And so on, you get the idea. This is well documented in the Measurement Protocol parameter reference guide.
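To make the point concrete, here is a minimal Python sketch that only assembles a Measurement Protocol pageview payload and sends nothing. The parameter names (`v`, `tid`, `cid`, `t`, `dh`, `dp`, `dr`, `cs`, `cm`, `uip`) are taken from the parameter reference guide; the helper name `build_hit` and every value are made up for illustration. Note that no field carries a secret or a signature: everything is chosen by whoever builds the request.

```python
from urllib.parse import parse_qs, urlencode


def build_hit(tracking_id: str, client_id: str, **overrides: str) -> str:
    """Return a URL-encoded pageview payload (illustrative helper, not an official API)."""
    params = {
        "v": "1",            # protocol version
        "tid": tracking_id,  # the UA-ID, readable in any page source
        "cid": client_id,    # anonymous client ID, picked by the sender
        "t": "pageview",     # hit type
    }
    params.update(overrides)
    return urlencode(params)


payload = build_hit(
    "UA-XXXXXXX-XX",
    "555",
    dh="example.com",               # hostname
    dp="/pricing",                  # URL path
    dr="http://referrer.example/",  # referrer
    cs="newsletter",                # campaign source
    cm="email",                     # campaign medium
    uip="203.0.113.7",              # IP override, which also drives geo-location
)
print(payload)
print(sorted(parse_qs(payload)))  # the complete set of fields in the hit
```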

The worst part of all of the above is that a spammer doesn’t even need to connect to your server in order to pollute your Google Analytics data. He’s connecting straight to the Google Analytics servers, thus leaving no trace of his activities on your side. This also makes it impossible to cut him off by standard security measures on your server, like firewalls and htaccess rules.

Possible Scenarios for Malicious Attacks on Google Analytics*

If something can be abused, it will be abused. It’s just a matter of time. So I believe it won’t be long before cyber vandalism or even malicious, targeted attacks on a business’s data integrity happen. Maybe they are already happening to unsuspecting victims.

The current attacks are by spammers that only try to “advertise” their websites/domain names. They want you to see them and go visit their site. This is much like the early years of hacking, when an attacker was sure to announce that he broke through your security, e.g. by defacing your site.

However, a malicious attacker can also spoof GA data in a way that is virtually undetectable by the owners of the Google Analytics accounts themselves. E-commerce data, goal conversions, events, pageviews, referrals. Everything. Every dimension can be forged and every metric can be influenced in a direction of choice.

This includes the AdWords data, the main reason we have Google Analytics in the first place. Fake visits with zero engagement and conversions can be sent, thus undermining the performance of the channel. This, of course, applies to any other channel.

Spikes and dips in traffic can be caused pretty much at will. They can be from targeted traffic sources, or to targeted pages, or spread across many sources and landing pages. Spikes and dips in metrics like bounce rate, session duration and pages/visit can be caused just as well. Analysts and marketers would waste tons of man-hours trying to understand what’s happening. Such activities may cause many people to lose their sleep and maybe even their jobs, in some cases.

I’ve come across cases where marketing companies sign up clients on a performance-based fee, measured by traffic or traffic from a specific source. In such cases, manipulating the data will cause the business owner to pay for thin air and to wonder why people are coming to the site, but nobody is buying. And while businesses that have historical data and are tech-savvy will realize in a month or two that this traffic is not like their usual traffic, such a tactic can be especially devastating if applied to a new business with no prior history to compare with.

These are just the first scenarios that came to my mind and I’ve not given this too much thought (like an attacker would do).

How easy is it to carry out a data pollution attack?

Very. A single average machine with internet connectivity can pollute the data of a site with tens or hundreds of thousands of users per day. If you have a really huge site, causing significant damage might require a bit more, but the payoff is much bigger, too. An attacker need not worry about using proxies or bot networks, because it’s unlikely that Google will start investigating such cases any time soon.

In terms of technical difficulty: getting your UA-ID takes 30 seconds at most. It would take an hour to write a good script that pollutes your data with one fake hit each time it’s run. It would take an attacker at most a couple of days to write a script that permutes the attack hits in such a way that it’s impossible to distinguish them from legitimate hits from end users. Then this script can be used on an unlimited number of targets, or it can be sold to other attackers for profit.

What defensive options do we have?

Currently: none.

First: detection and prevention. An attack is impossible to detect before it happens. Thus it’s impossible to prevent it. Every parameter can be faked, including IP address, network location, hostname, which are usually used for detection in such scenarios. There is, as far as I understand it, no single piece of information that you can see in a Google Analytics report that can be used to detect an attacker or to distinguish an attack hit from a regular one.

The only option is to detect an attack after the fact, when you see unusual patterns in your data: unusual referrers, spikes in traffic, strange metrics, etc. And this only happens if you have solid historical data to compare with and other analytics tools (based on server logs and other backend stats) that may hint at it as well. If the attack is carried out intelligently enough you might not even be able to do that. Also, even when you can tell you are a victim, you still can’t distinguish the good data from the bad.
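As a rough illustration of such after-the-fact detection, the Python sketch below flags days whose session count sits far from the trailing median of the preceding week. The function name, the window of 7 days, the threshold and the sample numbers are all assumptions chosen for the example, not anything Google Analytics provides. It needs historical data to work, and it can only say that a day looks odd, not which hits are fake.

```python
from statistics import median


def flag_unusual_days(daily_sessions, window=7, threshold=3.0):
    """Return indexes of days that deviate strongly from the trailing median.

    Deviation is measured in units of the median absolute deviation (MAD)
    of the previous `window` days.
    """
    flagged = []
    for i in range(window, len(daily_sessions)):
        history = daily_sessions[i - window:i]
        center = median(history)
        # Fall back to 1.0 so a perfectly flat history does not divide by zero.
        mad = median([abs(x - center) for x in history]) or 1.0
        if abs(daily_sessions[i] - center) / mad > threshold:
            flagged.append(i)
    return flagged


# Made-up daily session counts: a quiet week, one normal day, then a sudden jump.
sessions = [160, 175, 150, 190, 180, 170, 165, 172, 1800, 1750]
print(flag_unusual_days(sessions))
```

An attacker who ramps traffic up slowly, or who keeps the fake volume within normal variation, would stay under any threshold of this kind.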

Second: clean up. Simply impossible, since you can’t distinguish the garbage data from your real data. Or at least I’m not currently aware of a way to do that.

Is a long-term solution even possible?

A long-term solution for data integrity / data pollution attacks is possible only if an authentication mechanism can be used to verify who may send data to which UA-ID. However, the technical implementation of Google Analytics, whether it is the usual JS tracking or the Measurement Protocol, ultimately relies on an unidentified client machine to send the HTTP request. Thus, authentication is impossible in the current setup. Any such request can be spoofed/forged, and no fix exists that doesn’t require altering the core of the Google Analytics tracking functionality in a very, very significant way.

It appears Google knows about the issue from as early as 2013 (likely much earlier). One of the core people in the Google Analytics team, Nick Michailovski, replies to such a question in a Google Groups thread. His exact words:

“We’ve looked at this. If you are doing a client-based implementation, as long as you can get the http request from the client, then you can spoof the request.”

So unless Google decides to dramatically change the very core of the tracking part of GA, we are left to deal with the issue of referrer spam and malicious data pollution ourselves. And there is precious little we can actually do…

* The issue is not limited to Google Analytics only. As far as I’m aware other tracking solutions such as Omniture, Piwik, OWA, etc. also rely on a very similar technical approach. Thus, they are most likely all prone to the same exploit.

A site I work on has had malicious traffic being sent to it since early January. All direct traffic, though, no extra referrals.

All the direct traffic comes to the site, stays for a few seconds, and bounces. Completely distributed across geolocations and browsers (although older versions of IE & FF are mostly to blame).

It’s made our Analytics data that includes direct traffic completely worthless. Going from 150-200 direct visits a day to over 1,800 has swamped our data.

We’ve talked to everyone about this (including our Google AdWords rep and Google Analytics support for AdWords, our hosting company, and our internal IT department) and no one sees any pattern that can be identified and blocked.

Hey Nick,

Sorry to hear that you are already a victim. I was hoping that I was speaking about a hypothetical issue, but I figured that if someone is already affected it would be good to have some guidance about what’s happening. Sadly, I can’t propose any solution, as I say in the article itself. It would be a tough nut to crack, to say the least…

I’m thinking that maybe GA can deploy filters on their side, similar to the Fake Ad Click filters in AdWords, but I’m afraid that the problem of fake GA data is much worse than the problem of fake paid clicks, simply because of the much greater diversity in patterns.

Hi, great post, as is the other on filtering out referral spam.

I’m wondering if this means that we are going to need to rely on server-side log analysis tools to have any clue what is going on with our websites? Until GA retools with a validation system (which is likely to take some time) we can’t have any confidence that anything in GA is reliable.

Of course the web log analysis doesn’t give us nearly as much information, and is subject to fake referrals, bot traffic, and other ills, but the spammers will need to target actual sites, not blocks of GA account numbers.
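A minimal Python sketch of that kind of cross-check, assuming access logs in the common Apache/Nginx format and assuming every real visit produces at least one request that reaches your own server (no CDN or cache in front). The function names and the sample data are made up. Days on which GA reports more sessions than the server saw requests hint that hits were sent straight to Google.

```python
import re
from collections import Counter

# Matches the date part of a timestamp such as [10/Feb/2015:13:55:36 +0000]
LOG_DATE = re.compile(r"\[(\d{2}/\w{3}/\d{4}):")


def requests_per_day(log_lines):
    """Count logged requests per day, keyed by the log's own date string."""
    counts = Counter()
    for line in log_lines:
        match = LOG_DATE.search(line)
        if match:
            counts[match.group(1)] += 1
    return counts


def unexplained_sessions(ga_sessions, log_counts):
    """Return days where GA sessions exceed requests seen by the server."""
    return {
        day: sessions - log_counts.get(day, 0)
        for day, sessions in ga_sessions.items()
        if sessions > log_counts.get(day, 0)
    }


log_lines = [
    '203.0.113.5 - - [10/Feb/2015:13:55:36 +0000] "GET / HTTP/1.1" 200 2326',
    '203.0.113.9 - - [10/Feb/2015:14:02:10 +0000] "GET /about HTTP/1.1" 200 1780',
    '203.0.113.5 - - [11/Feb/2015:09:12:01 +0000] "GET / HTTP/1.1" 200 2326',
]
ga_sessions = {"10/Feb/2015": 2, "11/Feb/2015": 40}  # made-up GA export
print(unexplained_sessions(ga_sessions, requests_per_day(log_lines)))
```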

What do you think? Are there good options for log analysis tools that are worth the time and $?

Hi Jay,

None that I can think of (on web log analytics tools). Also, I don’t see GA fixing the issue anytime soon. They’ve been aware of it for quite a while and it’s also very difficult/impossible on a technical level.

Georgi

Hi Georgi, you seem to have the best grasp of this issue, and you are the only one not to recommend the htaccess block. I always thought GA should have a way to FILTER OUT ALL referrer data via a report, or an OPTION just to remove the garbage from the data set. Then you could visit the referrer data if you wanted to see the real traffic. But the direct spam mentioned above appears to devalue this approach. I believe this is why Google recently recommended all websites use https…

Hi Christian,

Thanks. I’m not sure though that I follow you about “a way to filter out all referrer data”? There are multiple ways to do just that, if you so wish, but this is generally not desired. I have to assume I’m misunderstanding your point.

To my knowledge HTTPS does precious little to mitigate such issues.

“This includes the AdWords data”

I can easily see how utm parameters can be forged, but is the same true of the gclid?

No, gclid should be hard to forge, but it isn’t necessary to do that. Anybody can spoof AdWords traffic. Gclid is responsible for clicks/cost/campaign data, while sessions & everything else is in GA and thus – spoofable.

Ah, OK.

So if I was feeling naughty I would click an ad to get a valid gclid and then spoof the rest of the session?

What if you were to proxy requests to GA via your own servers, and (server-side) add a custom dimension or metric by which you could filter?

This would not prevent attackers from sending data, but it would allow you to filter that data.

If the attackers were to then try and send data via your proxy, you would possibly be able to identify the attacking IPs and block them.
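A minimal Python sketch of the tagging step such a proxy would perform, assuming custom dimension index 1 (`cd1` in the Measurement Protocol) is reserved for the marker. The constant names, the marker value and the function name are hypothetical. The proxy strips whatever the client sent in that slot and appends a value only the server knows, so a view filter on that dimension would keep only hits that came through the proxy.

```python
from urllib.parse import parse_qsl, urlencode

MARKER_PARAM = "cd1"             # hypothetical: custom dimension 1 holds the marker
MARKER_VALUE = "via-proxy-7f3a"  # hypothetical value, never exposed to browsers


def tag_hit(raw_query: str) -> str:
    """Drop any client-supplied marker and append the server-side one."""
    params = [
        (key, value)
        for key, value in parse_qsl(raw_query, keep_blank_values=True)
        if key != MARKER_PARAM
    ]
    params.append((MARKER_PARAM, MARKER_VALUE))
    return urlencode(params)


incoming = "v=1&tid=UA-XXXXXXX-XX&cid=555&t=pageview&cd1=guess"
print(tag_hit(incoming))
```

Hits sent directly to Google would lack the correct marker, as long as its value stays secret.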

Hey Tim. Interesting idea. That’s quite complicated, but it might work (I can’t quite imagine the whole setup in my head). Still won’t prevent a well-thought & targeted distributed attack but should take care of 99.99% of issues.

I’d probably start by trying to hack analytics.js so that it made its requests to my servers or accepted a parameter for server name, and once I got it doing that, set up the necessary proxy on my servers to respond to those requests by making a request to Google’s servers, and pass the response back to the browser.

Thinking about it, there is another opportunity here, and that would be to filter incoming requests for certain terms (eg urls that have appeared in a spammy way in your referrer traffic previously) and either strip those parts, drop the requests, or temporarily automatically ban the IP.
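A rough Python sketch of that filtering idea, assuming the proxy sees the raw query string of each hit and that the referrer arrives in the `dr` parameter. The blocklist entries and the function name are made up for illustration.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical blocklist, built from referrers previously seen as spam.
BLOCKED_REFERRER_HOSTS = {"spam.example", "seo-offers.example"}


def should_drop(raw_query: str) -> bool:
    """Return True when the hit's referrer host is blocklisted."""
    referrer = parse_qs(raw_query).get("dr", [""])[0]
    host = (urlparse(referrer).hostname or "").lower()
    return any(
        host == blocked or host.endswith("." + blocked)
        for blocked in BLOCKED_REFERRER_HOSTS
    )


print(should_drop("v=1&t=pageview&dr=http%3A%2F%2Fwww.spam.example%2Foffer"))
print(should_drop("v=1&t=pageview&dr=https%3A%2F%2Fwww.google.com%2F"))
```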

Potentially you could go further and issue a token at page load that would be required to allow the request to be forwarded, and you could potentially expire the token after its first use – although this approach may not work or might need refinement in cases where you are recording events more than page loads.
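A minimal Python sketch of such a single-use token, using an HMAC signature so the proxy can verify tokens it issued. The key handling, the 30-minute lifetime, the in-memory set of used nonces and both function names are assumptions made for the example; a real setup with several servers would need a shared store.

```python
import hashlib
import hmac
import secrets
import time

SECRET_KEY = secrets.token_bytes(32)  # hypothetical per-deployment signing key
TOKEN_TTL_SECONDS = 1800              # assumed lifetime: 30 minutes
_used_nonces = set()                  # in-memory only, for the sketch


def _sign(message: str) -> str:
    return hmac.new(SECRET_KEY, message.encode(), hashlib.sha256).hexdigest()


def issue_token(now=None) -> str:
    """Create a signed token at page load: nonce.timestamp.signature."""
    issued_at = int(time.time() if now is None else now)
    message = f"{secrets.token_hex(8)}.{issued_at}"
    return f"{message}.{_sign(message)}"


def redeem_token(token: str, now=None) -> bool:
    """Accept a token once, if its signature is valid and it has not expired."""
    current = int(time.time() if now is None else now)
    try:
        nonce, issued, signature = token.split(".")
        issued_at = int(issued)
    except ValueError:
        return False
    expected = _sign(f"{nonce}.{issued}")
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False
    if current - issued_at > TOKEN_TTL_SECONDS or nonce in _used_nonces:
        return False
    _used_nonces.add(nonce)  # expire after first use
    return True


token = issue_token()
print(redeem_token(token))  # first use
print(redeem_token(token))  # replay of the same token
```

Recording several events per page load would need a token that allows a limited number of uses, or a fresh token returned with each forwarded hit.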

I just wanted to chime in about direct traffic SPAM hits. We’ve seen a spike recently on several sites. They have been less severe on the sites where we require authentication in order to access the site from certain countries.

All of this SPAM makes the GA data very difficult to sift through & make sense of. Not to mention new referral SPAM offenders popping up every couple weeks. There’s almost no way to keep up with them in a filtered view.

You might want to check my other post on referrer spam: http://blog.analytics-toolkit.com/2015/guide-referrer-spam-google-analytics/ . Check solution #2, it should take care of 99% of those hits.