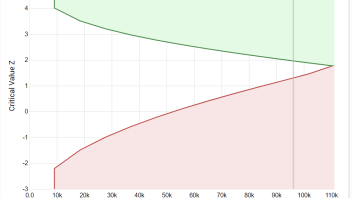

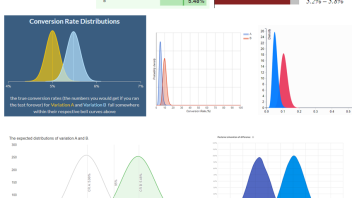

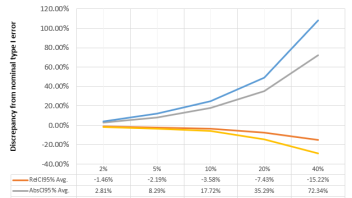

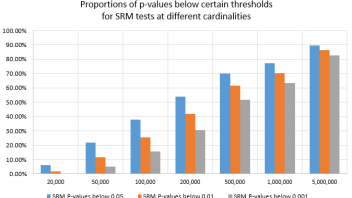

This article examines the effects of using the HyperLogLog++ (HLL++) cardinality estimation algorithm in applications where its output serves as input for statistical calculations. A prominent example is online controlled experiments (online A/B tests), where key performance measures are often based on the number of unique users, […]